How Object Detection Models Work Internally

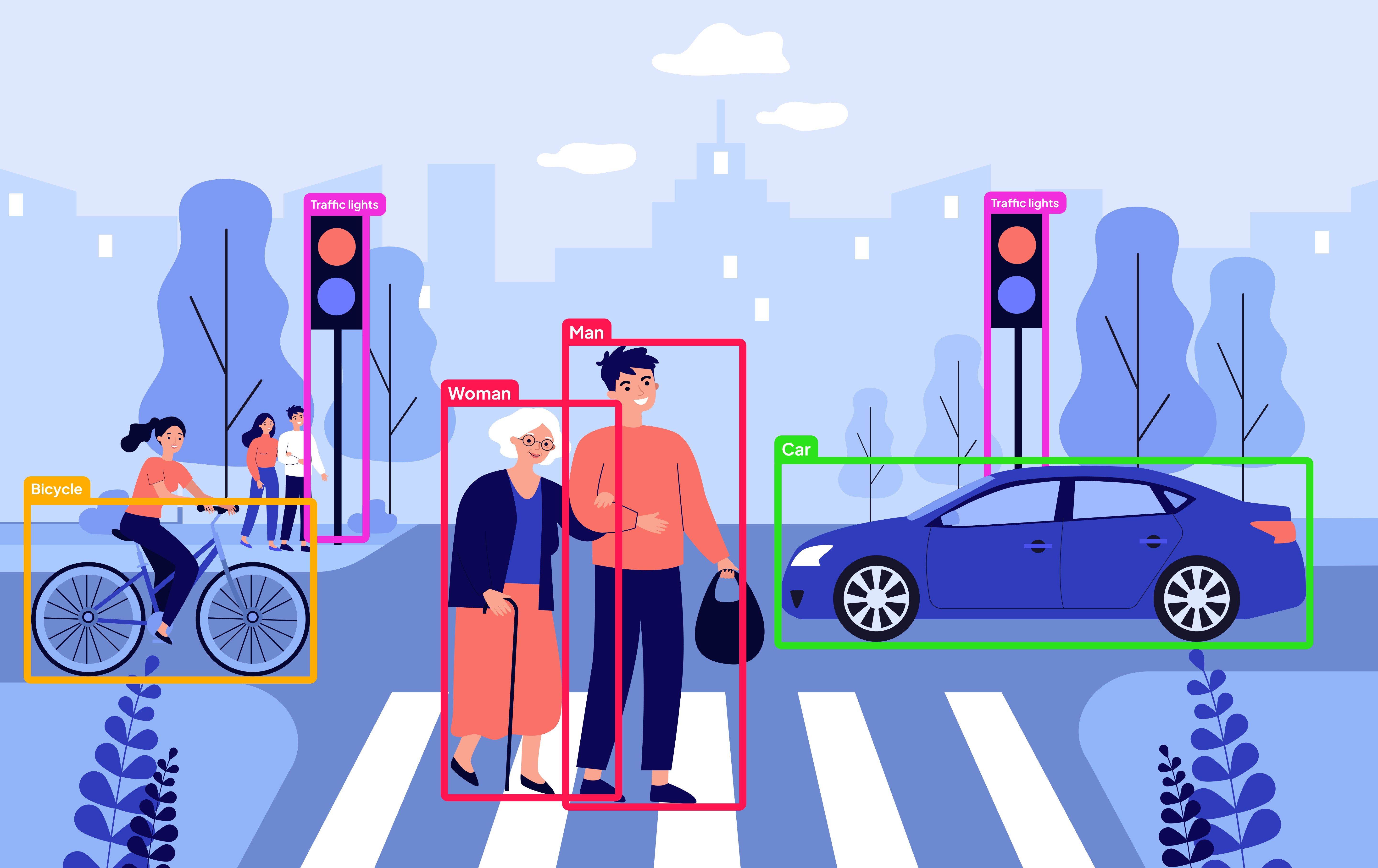

Object detection models take an image and return bounding boxes with labels. While the output looks simple, there is a well-structured pipeline inside the model that handles perception, reasoning, and localization. This article breaks that pipeline down in a practical and intuitive way.

The goal of object detection

An object detection model answers two questions at the same time:

- What objects are present?

- Where are they located?

To do this, the model must understand visual patterns, reason about context, and predict precise locations.

Step 1: Image preprocessing

The model cannot work directly with raw images. The image is:

- Resized to a fixed resolution

- Normalized (pixel values scaled, typically to [0, 1] and then standardized with a per-channel mean and standard deviation)

- Converted into a tensor

At this stage, the image is just numbers arranged in a structured grid.
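The three preprocessing steps can be sketched in plain Python. This sketch assumes a tiny RGB image stored as nested lists with values from 0 to 255. The nearest-neighbour resize, the 4x4 target size, and the ImageNet mean/std constants are illustrative assumptions. Real pipelines usually rely on a library such as torchvision or OpenCV, and they use whatever normalization constants the backbone was trained with.

```python
from typing import List

Image = List[List[List[int]]]  # H x W x 3, values 0..255
Tensor = List[List[List[float]]]  # C x H x W

# Per-channel statistics commonly used for ImageNet-pretrained backbones (assumption).
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def resize_nearest(img: Image, out_h: int, out_w: int) -> Image:
    """Resize to a fixed resolution using nearest-neighbour sampling."""
    in_h, in_w = len(img), len(img[0])
    return [
        [img[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


def to_normalized_chw(img: Image) -> Tensor:
    """Scale pixels to [0, 1], standardize per channel, and reorder to C x H x W."""
    h, w = len(img), len(img[0])
    return [
        [[(img[y][x][c] / 255.0 - MEAN[c]) / STD[c] for x in range(w)] for y in range(h)]
        for c in range(3)
    ]


def preprocess(img: Image, size: int = 4) -> Tensor:
    return to_normalized_chw(resize_nearest(img, size, size))


if __name__ == "__main__":
    # Hypothetical 2 x 3 image: one red row above one white row.
    raw = [[[255, 0, 0]] * 3, [[255, 255, 255]] * 3]
    tensor = preprocess(raw)
    print(len(tensor), len(tensor[0]), len(tensor[0][0]))  # channels, height, width
```

The channel-first (C x H x W) layout matches what most deep learning frameworks expect for image tensors.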

Step 2: Feature extraction (learning to see)

The backbone network extracts visual features.

CNN-based backbones

- Use convolution layers

- Learn edges → textures → shapes → object parts

- Common backbones: ResNet, CSPDarknet
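The sketch below makes "learn edges" concrete by showing the operation that a single convolution channel performs. The hand-written vertical-edge kernel is an illustrative assumption. In a trained backbone, the kernel weights are learned, and many such layers are stacked so that later layers respond to textures, shapes, and object parts.

```python
from typing import List

Grid = List[List[float]]


def conv2d(image: Grid, kernel: Grid) -> Grid:
    """Valid (no padding), stride-1 2D cross-correlation, the operation CNN layers compute."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[y + i][x + j] for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]


def relu(grid: Grid) -> Grid:
    """Non-linearity applied after convolutions; keeps only positive responses."""
    return [[max(0.0, v) for v in row] for row in grid]


# Sobel-style kernel that responds strongly to dark-to-bright vertical edges.
VERTICAL_EDGE = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]

if __name__ == "__main__":
    # Hypothetical grayscale patch: dark left half, bright right half.
    patch = [[0, 0, 1, 1]] * 4
    print(relu(conv2d(patch, VERTICAL_EDGE)))  # [[4, 4], [4, 4]]
```

A uniform patch produces all zeros with this kernel, so the feature map "lights up" only where an edge exists.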

Transformer-based backbones

- Either start from CNN feature maps (as in DETR) or split the image directly into patches (as in ViT and Swin)

- Convert features into tokens

- Use self-attention to model global relationships

Output:

Feature maps = visual understanding of the image

Step 3: Context and relationships

Understanding objects requires context:

- A wheel near a metal body suggests a car

- A face near a body suggests a person

Transformers are well suited to this because self-attention lets every region of the image attend to every other region. This can help with:

- Occlusion

- Overlapping objects

- Small objects in cluttered scenes
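The core of this idea is sketched below on a tiny C x H x W feature map. First, the map is flattened into H*W tokens, which is the "convert features into tokens" step described earlier. Next, scaled dot-product self-attention lets every token mix in information from every other token. To keep the sketch short, it uses the tokens directly as queries, keys, and values. Real transformer layers also apply learned projections, multiple heads, and positional encodings, all of which are omitted here.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def flatten_to_tokens(feature_map: List[Matrix]) -> Matrix:
    """C x H x W feature map -> (H*W) tokens, each a C-dimensional vector."""
    channels, h, w = len(feature_map), len(feature_map[0]), len(feature_map[0][0])
    return [[feature_map[c][y][x] for c in range(channels)] for y in range(h) for x in range(w)]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: Matrix) -> Tuple[Matrix, Matrix]:
    """Single-head scaled dot-product attention with identity Q/K/V projections."""
    d = len(tokens[0])
    # scores[i][j]: how relevant token j is to token i.
    scores = [[sum(q * k for q, k in zip(qi, kj)) / math.sqrt(d) for kj in tokens] for qi in tokens]
    weights = [softmax(row) for row in scores]  # each row sums to 1
    # Each output token is a weighted average over all tokens in the image.
    outputs = [
        [sum(w * tokens[j][c] for j, w in enumerate(row)) for c in range(d)]
        for row in weights
    ]
    return outputs, weights


if __name__ == "__main__":
    # Hypothetical 2-channel, 2 x 2 feature map.
    fmap = [[[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]]]
    tokens = flatten_to_tokens(fmap)
    out, attn = self_attention(tokens)
    print(len(tokens), len(attn), len(attn[0]))  # 4 tokens, 4 x 4 attention weights
```

Because the attention weights span all token pairs, a region in one corner can directly influence the representation of a region in the opposite corner. A single convolution cannot do this.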

Step 4: Object candidates

Different models generate object candidates differently.

Anchor-based detectors (e.g., SSD, YOLOv2 to YOLOv5)

- Predefined anchor boxes

- Model adjusts anchors to fit objects

- Produces many overlapping predictions
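The sketch below illustrates "the model adjusts anchors" by decoding one prediction with the parameterization introduced in YOLOv2. In that scheme, the network outputs offsets (tx, ty, tw, th) relative to a grid cell and an anchor's width and height. Other anchor-based detectors, such as SSD and Faster R-CNN, use a related but different encoding. The numbers in the example are hypothetical.

```python
import math
from typing import NamedTuple


class Box(NamedTuple):
    cx: float  # center x in pixels
    cy: float  # center y in pixels
    w: float   # width in pixels
    h: float   # height in pixels


def sigmoid(v: float) -> float:
    return 1.0 / (1.0 + math.exp(-v))


def decode_yolov2(tx: float, ty: float, tw: float, th: float,
                  cell_x: int, cell_y: int, anchor_w: float, anchor_h: float,
                  stride: int) -> Box:
    """Decode raw offsets into a pixel-space box (YOLOv2-style parameterization).

    cell_x, cell_y: grid-cell indices; anchor_w, anchor_h: anchor size in grid cells;
    stride: pixels per grid cell.
    """
    cx = (cell_x + sigmoid(tx)) * stride  # sigmoid keeps the center inside its cell
    cy = (cell_y + sigmoid(ty)) * stride
    w = anchor_w * math.exp(tw) * stride  # exp stretches or shrinks the anchor
    h = anchor_h * math.exp(th) * stride
    return Box(cx, cy, w, h)


if __name__ == "__main__":
    # Hypothetical raw outputs for cell (3, 5), a 2 x 4-cell anchor, and a 32-pixel grid.
    print(decode_yolov2(0.0, 0.0, 0.0, 0.0, 3, 5, 2.0, 4.0, 32))
    # Box(cx=112.0, cy=176.0, w=64.0, h=128.0)
```

Every anchor at every grid cell produces a decoded box like this one. That is why anchor-based detectors end up with many overlapping predictions for the same object.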

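Query-based detectors (DETR, DINO)

- Use a fixed number of learned object queries

- Each query predicts one object or "no object"

- Produce a fixed-size set of predictions with few duplicates

This shift removes hand-designed anchors and duplicate-removal post-processing, which greatly simplifies detection logic.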

Step 5: Bounding box prediction

For each object candidate, the model predicts:

x_center, y_center, width, height

These values are relative to the image size and are later converted to pixel coordinates.

The model learns object geometry implicitly during training.
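That conversion can be sketched as follows. The sketch assumes the normalized (x_center, y_center, width, height) format with values in [0, 1], as used by DETR-style models. It outputs pixel corner coordinates (x_min, y_min, x_max, y_max), a common format for drawing boxes. The image size in the example is hypothetical.

```python
from typing import Tuple

Box4 = Tuple[float, float, float, float]


def cxcywh_norm_to_xyxy_pixels(box: Box4, img_w: int, img_h: int) -> Box4:
    """Convert a normalized center-format box to pixel corner coordinates."""
    cx, cy, w, h = box
    cx, w = cx * img_w, w * img_w  # horizontal values scale with image width
    cy, h = cy * img_h, h * img_h  # vertical values scale with image height
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)


if __name__ == "__main__":
    # A box centered in a 640 x 480 image that covers half its width and height.
    print(cxcywh_norm_to_xyxy_pixels((0.5, 0.5, 0.5, 0.5), 640, 480))
    # (160.0, 120.0, 480.0, 360.0)
```

Keeping predictions normalized during training makes them independent of the input resolution. Pixel coordinates are needed only at the very end.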

Step 6: Classification

Each predicted box also includes a class probability distribution:

Person: 0.94

Dog: 0.03

Chair: 0.01

The highest score determines the final label.
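A distribution like this one is typically produced by applying a softmax to the model's raw per-class outputs (logits). The sketch below uses hypothetical logits and class names to turn logits into probabilities and pick the highest-scoring label. Some detectors use independent per-class sigmoid scores instead of a softmax. The final step of choosing the top score is the same either way.

```python
import math
from typing import Dict, List, Tuple


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits: List[float], class_names: List[str]) -> Tuple[str, Dict[str, float]]:
    """Return the top label and the full probability distribution for one box."""
    distribution = dict(zip(class_names, softmax(logits)))
    label = max(distribution, key=distribution.get)  # highest score wins
    return label, distribution


if __name__ == "__main__":
    label, dist = classify([4.0, 0.6, -0.5], ["person", "dog", "chair"])
    print(label, {k: round(v, 2) for k, v in dist.items()})
```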

Step 7: Training with ground truth

During training, predictions are matched with ground-truth boxes.

Modern approach (DETR / DINO)

- Uses Hungarian matching

- One-to-one matching between predictions and targets

- Discourages duplicate detections, since only one prediction can be matched to each object

Loss is computed on:

- Classification error

- Bounding box coordinate error (an L1 distance in DETR)

- Box overlap mismatch (a generalized IoU loss in DETR)

This allows true end-to-end training.
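The sketch below illustrates one-to-one matching on a toy example. The cost of assigning a prediction to a ground-truth object has two parts: a classification term (the negative predicted probability of the target's class) and an L1 distance between the two boxes. This loosely follows DETR's matching cost but leaves out its generalized-IoU term. The sketch also does not implement the Hungarian algorithm. Instead, it brute-forces every assignment with `itertools.permutations`, which finds the same minimum-cost assignment but only scales to a handful of boxes. In practice, a solver such as SciPy's `linear_sum_assignment` is used. The helper names and numbers are hypothetical.

```python
from itertools import permutations
from typing import Dict, List, Optional, Sequence, Tuple

BoxT = Tuple[float, float, float, float]  # normalized (cx, cy, w, h)
Prediction = Tuple[Dict[str, float], BoxT]  # (class probabilities, box)
Target = Tuple[str, BoxT]  # (ground-truth class, box)


def l1(a: BoxT, b: BoxT) -> float:
    return sum(abs(x - y) for x, y in zip(a, b))


def match_cost(pred: Prediction, target: Target) -> float:
    """Lower is better: a confident, correct class and a nearby box both reduce cost."""
    probs, box = pred
    cls, gt_box = target
    return -probs.get(cls, 0.0) + l1(box, gt_box)


def one_to_one_match(preds: Sequence[Prediction], targets: Sequence[Target]) -> List[Tuple[int, int]]:
    """Return (prediction_index, target_index) pairs with minimum total cost.

    Brute force over all assignments; assumes len(preds) >= len(targets).
    Each target receives exactly one prediction; leftover predictions stay unmatched.
    """
    best: Optional[Tuple[int, ...]] = None
    best_cost = float("inf")
    for chosen in permutations(range(len(preds)), len(targets)):
        # chosen[t] is the index of the prediction assigned to target t.
        cost = sum(match_cost(preds[p], targets[t]) for t, p in enumerate(chosen))
        if cost < best_cost:
            best, best_cost = chosen, cost
    return [(p, t) for t, p in enumerate(best or ())]


if __name__ == "__main__":
    preds = [
        ({"person": 0.9, "dog": 0.1}, (0.30, 0.40, 0.20, 0.50)),
        ({"person": 0.2, "dog": 0.7}, (0.70, 0.60, 0.30, 0.30)),
        ({"person": 0.8, "dog": 0.2}, (0.32, 0.41, 0.22, 0.48)),  # near-duplicate of prediction 0
    ]
    targets = [("person", (0.31, 0.40, 0.21, 0.50)), ("dog", (0.70, 0.62, 0.30, 0.28))]
    print(one_to_one_match(preds, targets))  # [(0, 0), (1, 1)]
```

In this example, prediction 2 is a near-duplicate of prediction 0, but it is left unmatched. During training, it would be pushed toward the "no object" class, which is how one-to-one matching discourages duplicates.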

Step 8: Inference time behavior

At inference:

- Low-confidence predictions are filtered

- Remaining boxes are returned directly

DETR-style models trained with one-to-one matching typically do not require Non-Maximum Suppression (NMS), unlike anchor-based detectors, which produce many overlapping boxes for the same object.
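For contrast, the sketch below shows the post-processing that an anchor-based detector typically needs. It first drops low-confidence boxes and then applies greedy NMS, which keeps the highest-scoring box and discards any remaining box that overlaps it by more than an IoU threshold. A DETR-style model would stop after the confidence filter. The thresholds (0.5 for confidence and 0.5 for IoU) and the boxes are hypothetical. Real implementations usually run NMS separately for each class.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels
Detection = Tuple[Box, float]  # (box, confidence score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two corner-format boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def filter_and_nms(detections: List[Detection], score_thr: float = 0.5,
                   iou_thr: float = 0.5) -> List[Detection]:
    # Step 1: drop low-confidence predictions (DETR-style models stop here).
    kept = [d for d in detections if d[1] >= score_thr]
    # Step 2: greedy NMS, visiting boxes from highest to lowest score.
    kept.sort(key=lambda d: d[1], reverse=True)
    result: List[Detection] = []
    for box, score in kept:
        if all(iou(box, r[0]) <= iou_thr for r in result):
            result.append((box, score))
    return result


if __name__ == "__main__":
    dets = [
        ((100, 100, 200, 200), 0.92),
        ((105, 98, 203, 205), 0.85),   # near-duplicate of the first box
        ((300, 120, 380, 220), 0.77),  # a separate object
        ((50, 50, 90, 90), 0.20),      # low confidence
    ]
    for box, score in filter_and_nms(dets):
        print(box, score)
```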

CNN vs Transformer detectors

| Aspect | CNN-based (anchor-based) | Transformer-based (DETR-style) |
|---|---|---|
| Hand-designed anchors | Required | Not used |
| NMS | Required | Not needed |
| Context | Local | Global |
| Training | Heuristic-heavy | End-to-end |
| Output stability | Medium | High |

Why modern detectors feel simpler

The internal pipeline is cleaner:

- No hand-tuned anchor boxes

- No NMS or other duplicate-removal heuristics

- Stable predictions

This makes models like DINO easier to train, debug, and deploy.

Mental model to remember

Think of object detection as:

- Seeing visual patterns

- Understanding relationships

- Asking if objects exist

- Answering with class + box

Final thoughts

Object detection models do not draw boxes randomly. They learn structured visual reasoning through layered representations and optimized matching strategies. Understanding this internal flow makes it easier to choose, tune, and deploy the right detection model for real-world problems.

This is an excerpt from Abdulvahab Shaikh's How Object Detection Models Work Internally article. I highly recommend you give it a read!